There is a split happening right now in software, and I do not think it is mainly between AI believers and AI skeptics. The deeper split is between different kinds of builders doing different kinds of work under very different levels of risk. I have seen both sides of it. Having helped develop these tools at GitHub, I have had some amazing results with AI coding systems. I am squarely in the “vibe coding is useful” camp. I have also felt the review burden and operational anxiety that come with giant AI-generated changes landing faster than people can responsibly inspect them. In my last post I argued that engineers need fluency across a spectrum of AI-assisted development modes. But that assumes the builder is an engineer. What happens when the builder is not?

AI is not just changing how engineers work. It is changing who gets to build.

The Dream That Has Always Been

I am a child of 90s hacker culture, really a hippie hacker mashup at heart. I first stumbled onto HyperCard at school during typing class when I was 12 and fell in love. I would build little games and was completely taken by how easy it was to create something interactive on a computer. In my opinion HyperCard was one of the best things Apple ever built.

That feeling, the thrill of making a machine do what you imagined, has always been part of the dream. Grace Hopper pushed for code and human language to converge. Xerox PARC worked on it. Seymour Papert built Logo so children could think with computers. Every generation has had people trying to lower the barrier between having an idea and making it real.

And every generation of professional developers has, often inadvertently, raised that barrier back up. That enabled us to build amazing things, and I love that, but it also alienated many people from trying things that were once simple. What was once a small HTML page with some inline PHP became tens of thousands of lines of code, multiple databases, multiple build systems, and several frameworks you needed to know just to get started.

We got very far away from the dream of everyone being empowered to build.

This complexity pushed out a group of people who once built for the internet, and I think now they are coming back. AI is bringing that possibility back, and the reaction from the engineering community tells you everything about the split.

Two Legitimate Experiences

The discourse right now is remarkably polarized, and both sides are telling the truth about what they are experiencing.

On one side, non-technical founders are building and shipping real products with vibe coding tools. That matters. Software and computers are incredibly powerful tools for solving problems, and we now have both deterministic and non-deterministic problem-solving tools at our fingertips. Everyone should be empowered to solve their problems with these tools. In my opinion they belong to everyone, as is the spirit of the open source movement.

Y Combinator reported that 25% of their Winter 2025 batch had codebases that were 95% AI generated. Universities like the University of Cincinnati’s 1819 Innovation Hub are teaching vibe coding in their innovation labs. I have talked to professors like Geoffrey Challen who are using AI to build interactive graphical interfaces that teach complex algorithms to students at scale. Small nonprofits can build the intake tools they have needed for years. Teachers can build one-off tools to help them teach. Farmers can build tools to help them run their farms.

On the other side, the evidence for serious problems is mounting fast. Security is not built in. DryRun Security’s Agentic Coding Security Report found that 87% of AI-generated pull requests contained at least one security vulnerability. An engineer accidentally destroyed his production database when Claude Code executed a Terraform destroy on a live environment. Amazon convened an emergency engineering meeting after a string of outages, and internal documents originally cited Gen-AI assisted changes as a factor. CodeRabbit found AI co-authored code contained 1.7x more issues than human-written code. The 2025 Stack Overflow Developer Survey found that 46% of developers actively distrust AI output and only 3% highly trust it.

Both of these realities are true simultaneously.

The developer who vibe coded a working SaaS product in a weekend and the engineer whose production database got wiped are not having the same experience because they are not doing the same thing. They are operating at entirely different levels of complexity, risk, and consequence, and yet we keep talking about them as if they are in the same conversation.

As I wrote in my Power Tool Spectrum post, the right tool depends on the risk. A weekend SaaS prototype and a production infrastructure migration are not the same level of risk and should not be treated with the same level of rigor. Right now we do not have good systems for matching risk to rigor, and we do not have the guardrails in place to make even high-risk work safe. That is a symptom of the split.

Complexity Was a Trust Signal

For a long time we could reasonably treat software quality as a proxy for trust. Building software that worked well and looked good was complex and expensive, and that cost was a signal. It was imperfect (anyone who has dealt with their state DMV website knows that software can be costly and still terrible), but the signal was real enough to rely on most of the time.

Now that bar is moving. Someone in their living room can produce a webpage as polished as the best Apple website with no real engineering behind it. They may mean no harm, but they can cause immense harm through sheer incompetence. And bad actors are just as enabled: a convincing, professional-looking application is now nearly free to produce. Deception used to be easy to identify; now it is easy to produce and nearly free.

Without a quality trust signal, where does that leave us? Large, capable institutions will continue to earn trust through brand recognition and accountability, the same way they always have. But we have a new problem. How does a user trust the small business with a dream? How do we enable the teacher, the farmer, the nonprofit director to build software that their users can actually trust?

The answer is new trust signals. We need the people who understand how to build safe, scalable systems to build the tools that make trust legible, and to make sure the good actors have the best possible outcomes for society. Platform-level certification. Automated security checks surfaced to users, not buried in developer dashboards. Something like a building inspection certificate for software. The signal has to be explicit now, because the old one is gone.

The Problem With One Title

Job responsibilities are different in a way that often is not captured in these online quarrels. Every profession has people working at different levels of ability who take on tasks of different complexity with different tools.

Software somehow ended up flattening all of this into one title. We treated all coding as engineering because for a long time engineering really did matter that much and the tools really did require that depth. Now LLMs have up-leveled everyone and the mismatch is obvious. People without that background are shipping real things, and we do not quite know what to do with that.

We also bundled too many different kinds of software work under one professional expectation, requiring depth that was necessary for some tasks but excessive for others. When everyone is called a software engineer, the performance game kicks in. People build with unnecessary complexity to justify the title. We get resume-driven engineering where technology choices are about career advancement, not solving the problem. And people doing perfectly good work at a different level of complexity feel like impostors.

We created a lot of anxiety by pretending everyone was playing the same game.

A Layered System for a New Era of Builders

I think we need to build, deliberately and not accidentally, a layered professional ecosystem where each layer exists in service of the people it supports.

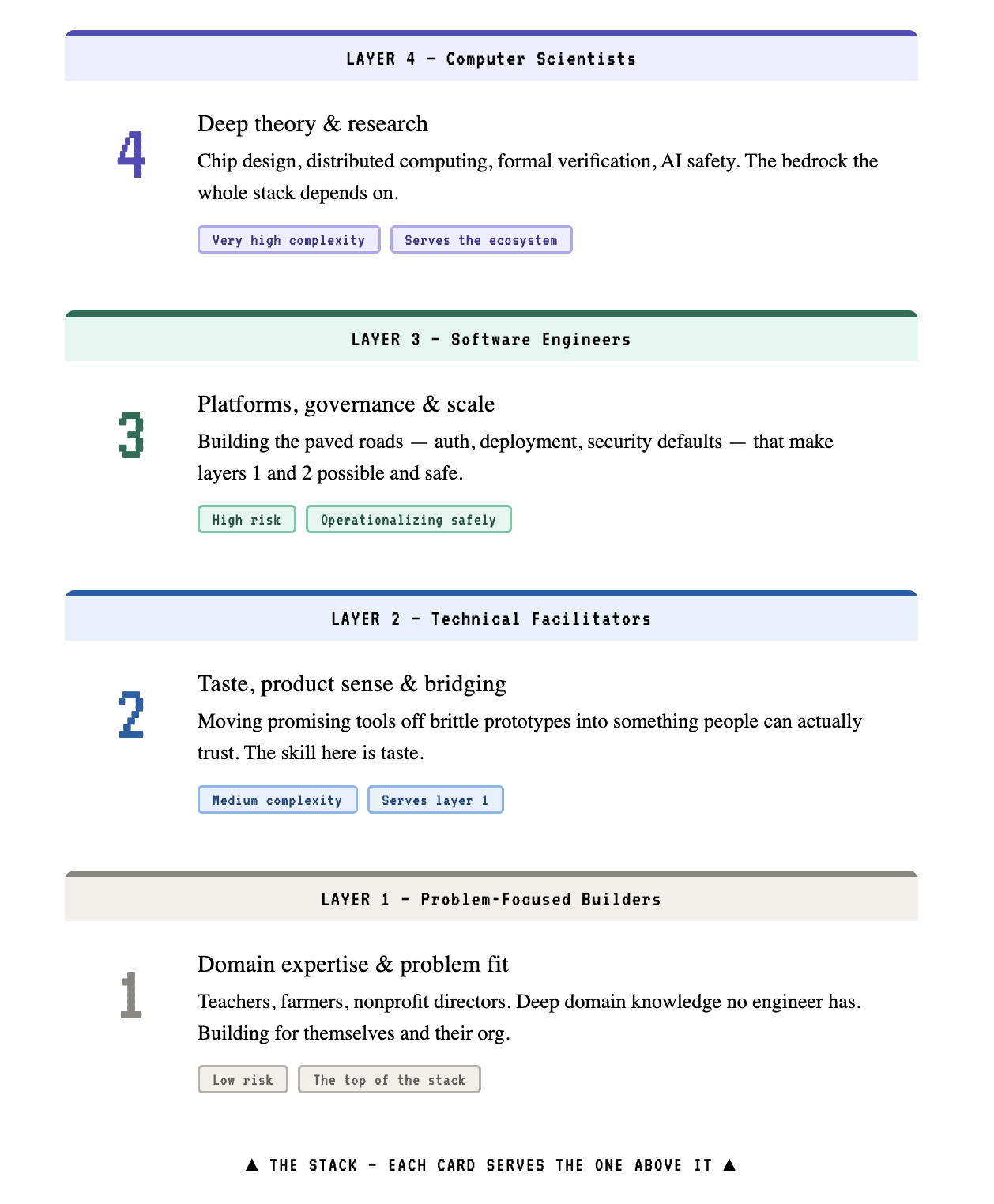

| Layer | Role | Risk | Complexity | Key Skill | Serves |
|---|---|---|---|---|---|
| 1 | Problem-Focused Builders | Low | Low | Domain expertise | Themselves, their org |
| 2 | Technical Facilitators | Medium | Medium | Productizing and bridging problem to tech | Layer 1 builders |
| 3 | Software Engineers | High | High | Platforms, judgment, governance, scale | Layers 1 and 2 |
| 4 | Computer Scientists | Very High | Very High | Deep theory, research | The entire ecosystem |

Layer 1: Problem-Focused Builders

These are the teachers, farmers, nonprofit directors, small business owners, and community organizers who have real problems and can now solve them with AI-assisted tools. They are not trying to become software engineers. They do not need to understand distributed systems, cloud networking, or database normalization. Classically we limited this group to spreadsheets, but now we are entering an era where they can build real software products without writing a line of code by hand. That is hugely empowering and full of risk, so we must support them.

The goal of Layer 1 is not to turn everyone into an engineer. The goal is to let people build and ship useful software safely within a platform designed for that purpose.

These builders bring something no engineer has: deep domain expertise. The teacher knows what her students struggle with. The farmer knows the crop rotation problem. The nonprofit director knows the intake workflow. What they need is not a CS degree. What they need is problem decomposition, basic security awareness, better defaults, and some training in software design, HCI, and data organization.

Layer 2: Technical Facilitators

These are the people who help successful Layer 1 projects grow up.

They take something built inside a constrained vibe-coding or builder platform and help move it onto a more flexible SaaS-like foundation without losing the original problem fit. Think of them as the general practitioners of software, or perhaps as its music producers. They understand the domain, they can work effectively with AI tools, and they have enough architectural, design, and product sense to reshape a promising tool without over-engineering it. The skill to have here is taste: the ability to shape a product, in a world of infinite flexibility, into something that people truly enjoy and that is safe for them to use.

This role already exists informally. It is the consultant helping a school system operationalize a successful internal tool. It is the technical product-minded builder helping a nonprofit move off a brittle prototype. It is the IT person who can take a useful internal hack and make it usable by an entire department.

Technical facilitators need practical architecture, security fundamentals, deployment knowledge, and the judgment to know when to escalate. They can read and write code well enough to direct AI effectively, refactor safely, improve data models, and make systems scale organizationally without immediately turning them into enterprise theater.

Layer 3: Software Engineers

Layer 3 is where the real leverage sits.

Software engineers are not just writing application code. They are building the platforms, guardrails, abstractions, and production systems that make Layers 1 and 2 possible. They create paved roads that let non-engineers ship useful tools without needing to understand the technical intricacies of doing this well. They also create the more flexible platforms that Layer 2 can use when a tool outgrows its original environment.

When code is cheap and abundant, the scarce skill is not writing it. It is operationalizing it safely.

Software engineers become the scaling, governance, and quality layer. They refactor codebases when vibe-coded projects succeed and need to grow. They design deployment infrastructure that makes it safe for non-engineers to ship code. They create authentication systems that just work. They build the roads, bridges, and water purification systems of the software world.

Layer 4: Computer Scientists

This is where CS departments need to refocus: not on churning out masses of programmers (AI is increasingly filling that function), but on the genuinely hard problems that require deep theoretical understanding: chip design, distributed computing, energy efficiency, quantum computing, formal verification, and the science of making AI coding tools themselves better and safer.

A large share of CS enrollment has long been driven less by love of computer science as a discipline and more by software engineering’s economic upside. That is fine. Those people now have clearer paths through Layers 2 or 3 without pretending they need to study compiler theory. And CS can return to being, well, science.

A Teacher’s Tool Moving Up the Ladder

Imagine a teacher builds a small AI-assisted reading feedback tool for her classroom. It helps students summarize what they read and generates practice questions based on the lesson. She builds it on top of a safe, opinionated platform created by Layer 3, something with hosting, permissions, authentication, and basic security already built in. She does not need to become a software engineer to get it into production for her classroom. That is the point.

At Layer 1, the teacher is the builder. She knows what students struggle with, what teachers actually need, and what makes the tool useful in practice. Her job is defining the problem, shaping the workflow, testing whether the results are good enough, and deciding whether the tool is worth using.

Then the tool gets popular. Other teachers want it. A principal wants it across the school. Soon the district is interested.

At Layer 2, a technical facilitator helps move the project off the original constrained builder platform and onto a more flexible product platform. Now the work is different: cleaning up the workflow, improving data handling, defining product boundaries, managing configuration across classrooms, and making sure the tool can serve many users without collapsing into prompt spaghetti.

At Layer 3, software engineers take responsibility for the deeper platform and for any parts of the system that have become real engineering problems: shared identity, district-wide permissions, student data protections, auditability, integrations, monitoring, reliability, scale, and governance.

At Layer 4, computer scientists may not work on this specific tool at all, but they improve the underlying capabilities the whole stack depends on: privacy-preserving methods, evaluation techniques, safer model behavior, and better systems for human-AI collaboration.

The point is not that the teacher failed and engineers had to take over. The point is that success changed the risk profile. As the tool moved up in consequence, responsibility moved up the ladder with it.
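To make the ladder concrete, here is a minimal sketch of how a platform might decide which layer should share responsibility for a tool as its reach grows. Everything in it is an assumption for illustration: the `ToolProfile` fields, the `responsible_layer` function, and the thresholds are hypothetical, not a recommendation. Layer 4 is left out because, as noted above, computer scientists may not work on a specific tool at all.

```python
from dataclasses import dataclass
from enum import IntEnum


class Layer(IntEnum):
    BUILDER = 1      # problem-focused builder (the teacher)
    FACILITATOR = 2  # technical facilitator
    ENGINEER = 3     # software engineer


@dataclass(frozen=True)
class ToolProfile:
    """Hypothetical description of how far a tool's consequences reach."""
    users: int
    stores_student_records: bool
    shared_across_schools: bool


def responsible_layer(tool: ToolProfile) -> Layer:
    """Return the highest layer that should share responsibility for the tool.

    The rules and the threshold of 30 users are illustrative assumptions.
    """
    if tool.shared_across_schools or tool.stores_student_records:
        return Layer.ENGINEER
    if tool.users > 30:  # assumed: larger than a single classroom
        return Layer.FACILITATOR
    return Layer.BUILDER


classroom = ToolProfile(users=28, stores_student_records=False, shared_across_schools=False)
school = ToolProfile(users=400, stores_student_records=False, shared_across_schools=False)
district = ToolProfile(users=12000, stores_student_records=True, shared_across_schools=True)

for name, tool in [("classroom", classroom), ("school", school), ("district", district)]:
    print(name, "->", responsible_layer(tool).name)
```

The teacher's code never changes in this sketch. Only the profile of who depends on it changes, and that alone is what moves responsibility up.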

The Guardrails Question

The obvious objection to empowering non-technical builders is: what about security? What about quality? What about the disasters?

This is the right question, and it needs a real answer, not hand-waving that AI is nearly good enough and will only get better. I think the answer is that the platforms and paved paths that enable this change are the responsibility of Layers 2 through 4, not Layer 1.

Right now we are handing people the equivalent of a car with no seatbelts and no speed limiter and then blaming them when they crash. Deployment is still too hard. Security is still too hard. Authentication is still too hard. Scaling large systems is still too hard. We are dropping people into complexity they cannot reasonably be expected to manage.
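One way to picture a seatbelt is a check that sits between an AI agent and the environment it acts on. The sketch below is a deliberately simple illustration, not how any real tool works: the `review_command` function, the pattern list, and the environment names are all hypothetical. It lets routine commands through and holds destructive ones aimed at production until a human has approved them.

```python
import re

# Hypothetical patterns for commands that can destroy data or infrastructure.
DESTRUCTIVE_PATTERNS = [
    re.compile(r"\bterraform\s+destroy\b"),
    re.compile(r"\bdrop\s+(database|table)\b", re.IGNORECASE),
    re.compile(r"\brm\s+-rf\b"),
]


def review_command(command: str, environment: str, human_approved: bool = False) -> str:
    """Decide whether an agent-proposed command may run.

    Returns "allow" or "needs_approval". Only destructive commands aimed at
    the (assumed) "production" environment are held for human approval.
    """
    destructive = any(p.search(command) for p in DESTRUCTIVE_PATTERNS)
    if not destructive or environment != "production":
        return "allow"
    return "allow" if human_approved else "needs_approval"


print(review_command("terraform plan", "production"))
print(review_command("terraform destroy -auto-approve", "production"))
print(review_command("terraform destroy", "staging"))
```

String matching like this is crude and easy to get around. A real platform would more likely enforce the same rule through credentials and permissions, so that a Layer 1 builder never holds the power to destroy production in the first place.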

People are building. They are not going to stop. It is our responsibility, as the engineers who understand these systems, to make sure the support systems exist. Not eventually. Now.

Why Enable the Masses

Some of the biggest problems in software right now are not purely engineering problems. They are human ones. Social networking that is not harmful to people. News sites that do not just run propaganda for profit. These are things engineers have been building, and we have not exactly crushed it. These are problems that need people with backgrounds in the humanities, journalism, social work, and education, people who understand the human side of these systems. Empowering those people to build is not just a nice-to-have. It might be part of how we fix some of the things we broke.

In The Cathedral and the Bazaar, one of the most poignant arguments, as I read it, is that the Cathedral (big businesses) does not solve problems as well as the Bazaar (hordes of open source developers) because the people building the solutions do not clearly understand the problems. This observation is exactly why we need to enable more people to be problem solvers. I long for the day when an expert at making friends can build a social network that actually helps me have better friendships.

We Are Scared, and That’s Okay

I was at a conference recently teaching a group of educators how to use AI agents to do very complex long-running builds, and someone asked, “What are we supposed to do?” This feeling is very real. We live in a complex world. Capitalism punishes people for not having valuable skills. Going from being economically valued to being replaced by a machine is awful. I told her there is a split coming and left it at that. I was not sure how to articulate it then, and I hope this piece explains what I meant.

I am reminded of what happened in medicine in the 1960s. Faced with a shortage of primary care doctors, the system created nurse practitioners. The medical establishment fought it hard. The role was viewed with alarm, suspicion, and distrust. Sound familiar?

But it worked. Doctors did not disappear. They specialized upward. Patients who would have had no access to care now had it. The system got better for everyone.

I believe something similar needs to happen in software. The layers I have described are not just an organizational framework. They are a map for where different kinds of skills and experience become more valuable, not less. If you are an engineer who understands the whole system, you are needed at Layer 3 more than ever. If you love solving deep technical problems, Layer 4 needs you. If you are the person who has always been good at understanding what people actually need and translating that into something buildable, Layer 2 is about to become one of the most impactful roles in tech.

The Apprenticeship Ladder

If juniors cannot get hired because AI is doing the work they used to learn from, where do tomorrow’s senior engineers come from?

I think the layered system helps here because each layer creates a natural apprenticeship model. You work within your level, building real things at the appropriate level of risk, and you get mentored by people in the layer above you who help you upskill based on the work you are already performing. A Layer 1 builder who gets curious about how the systems underneath work has a clear path into Layer 2. A Layer 2 facilitator who keeps running into scaling problems they cannot solve has a clear path into Layer 3.

The traditional path of “write boilerplate, get promoted” is disappearing. The layered system could replace it with something better: solve real problems first, then accumulate technical depth as the consequences grow. But we need businesses and universities to step up and support these new roles. That means new offerings beyond traditional CS degrees and more pathways from institutions like technical colleges.

What Happens Next

I do not have a crystal ball, but I do think there is a lot of work to do.

Education needs to split. The dream of “everyone can code” is here, but not everyone was, or ever will be, a CS major. Separate tracks need to emerge that map to the layers I have described. We need more domain experts exposed to these tools, more Layer 2 training in practical architecture and deployment, more software engineering programs focused on building safe systems, and CS departments refocused on hard science.

Businesses need to split work by risk. You would not fly in a vibe-coded airplane. Not every task in an organization carries the same risk, and not every task needs the same level of rigor. Matching the right layer of builder to the right level of risk is how organizations get the speed benefits of AI without the disasters we are already seeing pile up.

We also need an apprenticeship model. We are really bad at this. Mentoring is not apprenticeship. People need to be put into situations harder than they can handle alone, with support systems that make growth possible.

And engineers need to build the tools that support every layer. The new builders are coming, and they need better deployment tools, better security defaults, and better ways to get code into production safely without understanding the entire stack. Right now we are handing people powerful AI coding tools and then expecting them to figure out CI/CD pipelines, cloud infrastructure, and authentication on their own. That is our job to fix.

I do believe all these roles will use AI, and I do believe AI will write a lot of their code. But I think how much code is created, how quickly, and with how much rigor will split.

The engineers who thrive will be the ones who stop seeing non-technical builders as a threat and start seeing them as the people they are building for.

The split is real. But it is not a war. It is a specialization event.

If we design it well, it is the thing the computing visionaries have been dreaming about since Grace Hopper first imagined a computer that could understand English. We as engineers have a new responsibility to make sure that vision is realized in a way that is safe, inclusive, and empowering for everyone.

This post is a continuation of How Engineers Actually Work With AI: The Power Tool Spectrum. For the tactical framework on choosing AI modes for engineering tasks, start there.

Sources

- DryRun Security, “The Agentic Coding Security Report” (March 2026). Evaluated Claude Code, OpenAI Codex, and Google Gemini building two applications from scratch. 143 security issues across 38 scans; 87% of pull requests contained at least one vulnerability.

- CodeRabbit, “State of AI vs Human Code Generation Report” (December 2025). Analysis of 470 open-source GitHub pull requests. AI-generated PRs contained ~1.7x more issues; security vulnerabilities appeared at 2.74x the rate.

- Stack Overflow, 2025 Developer Survey — AI Section. 49,000+ respondents. 46% distrust AI output accuracy; only 3% highly trust it; 66% cite “almost right” AI solutions as top frustration.

- Alexey Grigorev, “How I Dropped Our Production Database” (March 2026). Primary source post-mortem of the Claude Code / Terraform incident.

- Fortune, “An AI agent destroyed this coder’s entire database” (March 2026). Covers Grigorev incident and Amazon outages.

- University of Cincinnati, “Coding without code: How vibe coding rewrites the rules” (March 2026). 1819 Innovation Hub vibe coding curriculum.